
Stop switching tabs to find context. Xurrent MCP is live.


🔒 A quick note on data security: Using MCP does not mean handing your database over to an LLM. Xurrent’s IMR MCP server executes locally and enforces your existing user permissions. Your data never leaves your infrastructure, and enterprise AI providers do not train on your API queries.

Read the Model Context Protocol architecture docs or review Anthropic’s Trust & Privacy center.

Most enterprise software vendors in 2026 are shipping AI features, and the volume has been overwhelming. But most of them are bolting AI on. A chatbot lives next to your incident tool, a summary widget lives next to your tickets, and the AI works on whatever surface it's stuck onto, with no real access to the work happening underneath.

We don't believe in that approach.

That's why we built two MCP servers, one for Xurrent IMR and one for Xurrent ITSM. Both let you ask questions of your live operational data in plain English from inside Claude or any MCP-compatible client.

This post is about why we shipped these the way we did, what they do, and what changes for you as a Xurrent user opening a Tuesday morning queue with 200 unread tickets.

The AI conversation has been stuck on the wrong thing

Every enterprise software vendor in 2026 is shipping AI. ServiceNow Now Assist. Atlassian Rovo. PagerDuty AIOps. Each one pitches their AI as the smartest in the room.

None of them are wrong about the AI. All of them are wrong about what makes it useful.

McKinsey put it cleanly earlier this year: "Today, AI is bolted on. But to deliver real impact, it must be integrated into core processes, becoming a catalyst for business transformation rather than a sidecar tool." Praveen Akkiraju said almost the same thing on CXOTalk two months later: "If you're just a bolt-on agent on top of software without fundamentally changing the way software interacts with data, with users, and being able to respond dynamically, then you clearly are going to lose that battle." Two senior voices, same conclusion.

There's a number that backs them up. MIT research found that 95% of AI projects never reach production. The bottleneck isn't model quality. The bottleneck is context. The data, the connections, the operational links the AI needs to actually be useful.

Here's the position we've taken. The AI in your operations is only as smart as the data it can reach. The teams winning in 2026 won't be the ones who bought the flashiest models. They'll be the ones whose AI has access to the work that's actually happening.

This is what we mean when we say AI built-in beats AI bolted-on. Built-in means the AI has the context of your incidents, your tickets, your on-call rotations, your service catalog, your knowledge base, your CIs. Bolted-on means a chatbot that can answer questions about a vendor's documentation but goes silent when you ask it about your environment.

Bring your own agent and connect with Xurrent MCP

We shipped two MCP servers in the last six weeks. Both live in production today. Both built on Anthropic's open MCP standard, so you're not locked into Claude or us. Connect any MCP-compatible client and you're querying live in under five minutes.

One thing worth saying upfront: these MCP servers don't require Claude. That's the point of the standard. Cursor, GitHub Copilot, your internal agent, whatever AI client your team already uses: if it speaks MCP, it connects to Xurrent. You bring your own agent. We give it access to your operational data. No lock-in, no proprietary wrapper, no "our AI only." If your engineering team wants to build a custom agent on top of your incident data, the MCP server is the interface. If your IT team wants to wire Xurrent into a broader agentic workflow, same story. The standard is open. The connection is yours to use however you need.

Xurrent IMR MCP

The IMR MCP server gives Claude access to your live incident data. Open incidents, on-call schedules, escalation policies, timelines, alerts, all queryable in plain English. No SQL. No dashboards. No copy-paste from Slack threads.

What an SRE actually asks during a live incident:

- "Walk me through the timeline of incident #75."

- "Who's on call for SRE right now and what's their handoff?"

- "Find any incidents related to Grafana alerts in the last week."

- "Pull on-call load by team member, I have a QBR in 30 minutes."

Queries like these took beta users five tabs and twenty minutes. Now they take one prompt and ten seconds.
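For the curious, here is roughly what travels over the wire when an MCP client turns one of those prompts into a tool call. MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method. This is a minimal sketch, not Xurrent's actual schema: the tool name `get_incident_timeline` and its `incident_id` argument are hypothetical, chosen only to mirror the first prompt above.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and argument, for illustration only.
payload = build_tool_call(1, "get_incident_timeline", {"incident_id": 75})
print(payload)
```

The client never writes SQL or touches a dashboard. It sends one small, structured request and reads back one structured response.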

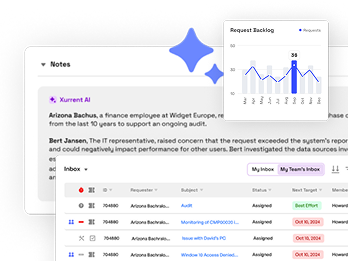

Xurrent ITSM MCP

The ITSM MCP server goes further. It doesn't just read your service operations data. It can act on it. Create requests, update ticket status, add notes, close resolved items, search knowledge, query CIs. The Service Desk Manager, the ITSM admin, and the L1/L2 specialist each get plain-English access to their data and the ability to move work forward without opening the tool.

What that looks like in practice:

- "Show me all open requests for Marketing, sorted by SLA risk."

- "Create a request for VPN access for new hire Jordan Lee, assign it to the IT Access team."

- "Mark request #4521 as resolved. Add a note: HubSpot integration restored after cache flush."

- "Find knowledge articles related to Cisco AnyConnect on macOS, and link the top result to request #4503."

Reading is useful. Acting is useful faster. The ITSM MCP does both, with a full audit trail behind every action the AI takes on your behalf.
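To make "a full audit trail behind every action" concrete, here is a minimal sketch of the idea, assuming an in-memory request and log. Every name in it (`Request`, `resolve_request`, `AUDIT_LOG`, the user `sdm_carol`) is hypothetical and stands in for whatever the platform does internally. The point is only that the write and the audit record happen together.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Request:
    request_id: int
    status: str = "open"
    notes: list = field(default_factory=list)


# Hypothetical audit log; a real system would persist this.
AUDIT_LOG: list = []


def resolve_request(request: Request, acting_user: str, note: str) -> None:
    """Mark a request resolved, attach a note, and record who did it."""
    request.status = "resolved"
    request.notes.append(note)
    AUDIT_LOG.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "user": acting_user,
        "via": "mcp",
        "action": "resolve_request",
        "request_id": request.request_id,
    })


req = Request(4521)
resolve_request(req, "sdm_carol", "HubSpot integration restored after cache flush.")
print(req.status, len(AUDIT_LOG))
```

Because the log entry names the human who asked, not just "the AI", a compliance reviewer can trace each change back to a person.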

Your data stays in Xurrent: How MCP handles security

If you are an engineering leader or a CISO reading this, your first thought is likely: "There is no way we are handing our live incident and ticket databases over to a third-party LLM."

We agree. That’s exactly why we built this using the Model Context Protocol (MCP) rather than building a custom integration that syncs your data into an AI provider's cloud.

Here is how the architecture actually protects your environment:

- The data never moves: You aren't syncing, duplicating, or uploading your database to Claude. The MCP server acts as a strictly defined API bridge. Claude asks a specific question, reads the response in real time, and that's it. Your data stays entirely inside Xurrent.

- Permissions map to the human: The AI doesn't get "god-mode" access to your instance. It queries data using the exact authorization of the person asking the question. If a user asks Claude to summarize an HR ticket they don't have Xurrent permissions to view, the MCP server blocks it.

- Zero model training: When using enterprise AI clients, your operational data is never used to train their underlying models. It exists in memory to answer your immediate prompt, and then it's gone.

It’s less like handing an AI the keys to your filing cabinet, and more like giving an assistant strict, supervised access to look at one specific file, read it aloud, and immediately put it back.
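The "permissions map to the human" rule can be sketched in a few lines. This is an illustration under stated assumptions, not Xurrent's implementation: the tickets, the users, and the team-based access model are all made up, and `read_ticket` is a hypothetical helper. What it shows is the shape of the check, which runs against the asking user's access before any data is returned to the model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ticket:
    ticket_id: int
    team: str
    summary: str


# Hypothetical in-memory data standing in for a live instance.
TICKETS = {
    4503: Ticket(4503, "IT", "Cisco AnyConnect fails on macOS"),
    9001: Ticket(9001, "HR", "Confidential leave request"),
}
USER_TEAMS = {"sre_alice": {"IT"}, "hr_bob": {"HR", "IT"}}


def read_ticket(user: str, ticket_id: int) -> Ticket:
    """Return the ticket only if the asking user is allowed to see it."""
    ticket = TICKETS[ticket_id]
    if ticket.team not in USER_TEAMS.get(user, set()):
        raise PermissionError(f"{user} may not view ticket {ticket_id}")
    return ticket


print(read_ticket("sre_alice", 4503).summary)
try:
    read_ticket("sre_alice", 9001)
except PermissionError as err:
    print(f"blocked: {err}")
```

The model never sees the HR ticket in the second call, because the check fails before any ticket content leaves the function.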

Fast context beats autonomous resolution

If you read the example prompts above carefully, you noticed something. None of those moments required a "smart" AI. Claude is a general-purpose model. What made it useful was the context it could reach.

This is the actual job AI does well in operations right now. Heinrich Hartmann, who has been writing about AI in SRE longer than most, put it most cleanly. AI's most valuable role isn't autonomous remediation. It's giving the engineer the context they need to fix things fast.

Fred Hebert, a few weeks earlier in SRE Weekly, noticed the related framing problem. AI coding tools are sold as partners that augment engineers. AI SRE and ITSM tools are sold as replacements for low-value work. The marketing language is the tell. It says how decision-makers see the role.

We don't see incident response or service operations as low-value work. We see them as context-heavy work. The job isn't routine. The job is figuring out, in the first 30 seconds, what's actually happening, where, who's affected, what changed recently, what to try first. By the time the engineer has the context, the actual fix is often the easy part.

Every minute spent gathering context across five tools is a minute the incident continues, the customer waits, or the SLA ticks closer to breach. AI that helps gather context is high-impact. AI that tries to take over the resolution layer creates the AI babysitting toil the Runframe 2026 report named, where 42% of enterprises with AI in incident management report higher human oversight costs than before adoption.

The metaphor we like is Informatica's. MCP is "USB-C for AI." A standard plug that any AI client can use to connect to any data source. The connector is universal. The data on the other end is yours. The intelligence happens on top of both.

What else we shipped this quarter

We talk a lot about MCP because it's the most visible expression of the philosophy. But the philosophy applies to everything we shipped this quarter, and that was a lot more than MCP. Every release was built around the same idea.

The pattern across everything we shipped this quarter is that nothing requires a separate AI license. Nothing requires a separate add-on. Nothing requires a separate vendor contract. The AI is part of the platform you're already paying for. The MCP servers are part of the platform you're already running. We're not building an AI product on the side. We're building one product that works the way modern engineering teams already work.

What good AI in your operations actually looks like

The MCP servers are live. The day-in-the-life is happening in customer environments today, not someday. The philosophy is shipping in code, not slides.

If you're picking AI tools for your service operations or your incident response in 2026, here's the question worth asking. Not "whose AI is smarter." Not "whose model has the most parameters." Ask: whose AI gets access to my real data, on my terms, with the audit trail my compliance team needs.

The vendor whose answer to that question is a working MCP server, two of them in our case, is the vendor whose AI will actually be useful next year. The vendor whose answer is a proprietary chatbot you can't extend, can't audit, can't connect to your other tools, is the vendor whose AI will look impressive in a demo and quiet in a real outage.

We want you to spend less time switching tabs and more time fixing what's actually broken. Less time hunting for context, more time using it. Less time being sold somebody else's AI, more time using yours.

Connect your Xurrent IMR or Xurrent ITSM account to Claude in under five minutes. Ask it something real. See what your data looks like coming back.

Rohan Taneja is the Product Marketing Manager at Xurrent. With a career built at the intersection of site reliability and product strategy, Rohan focuses on how emerging protocols like MCP can bridge the gap between "black box" AI and the high-stakes reality of modern ITOps.
